Introduction

Recently, with the rise of large language models and AI, we have seen innumerable advancements in natural language processing. Models in domains like text, code, and image/video generation have achieved human-like reasoning and performance. These models perform exceptionally well on general knowledge-based questions. Models like GPT-4o, Llama 2, Claude, and Gemini are trained on publicly available datasets. They fail to answer domain or subject-specific questions that may be more useful for various organizational tasks.

Fine-tuning helps developers and businesses adapt and train pre-trained models on a domain-specific dataset to achieve high accuracy and coherency on domain-related queries. Fine-tuning enhances the model's performance without requiring extensive computing resources because pre-trained models have already learned general text from the vast public data.

This blog will examine why we must fine-tune pre-trained models using the Lamini platform. This allows us to fine-tune and evaluate models without using many computational resources.

So, let’s get began!

Learning Objectives

- To explore the need to fine-tune open-source LLMs using Lamini

- To find out how Lamini is used and how instruction tuning applies to fine-tuned models

- To get a hands-on understanding of the end-to-end process of fine-tuning models.

This article was published as a part of the Data Science Blogathon.

Why Should One Fine-Tune Large Language Models?

Pre-trained models are primarily trained on vast general data, with a high chance of lacking context or domain-specific knowledge. Pre-trained models can also produce hallucinations and inaccurate or incoherent outputs. The most popular chatbots based on large language models, such as ChatGPT, Gemini, and BingChat, have repeatedly shown that pre-trained models are prone to such inaccuracies. This is where fine-tuning comes to the rescue: it can help adapt pre-trained LLMs to subject-specific tasks and questions effectively. Other ways to align models to your objectives include prompt engineering and few-shot prompt engineering.

However, fine-tuning remains an outperformer when it comes to performance metrics. Methods such as parameter-efficient fine-tuning (PEFT) and low-rank adaptation (LoRA) have further improved model fine-tuning and helped developers generate better models. Let's look at how fine-tuning fits in a large language model context.
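As a brief aside on the parameter-efficient methods mentioned above, the arithmetic below is a minimal sketch of why low-rank adaptation trains far fewer weights than full fine-tuning. The layer dimensions and rank are hypothetical values chosen for illustration, not figures from this article.

```python
def full_params(d_out: int, d_in: int) -> int:
    """Number of weights in a dense d_out x d_in matrix."""
    return d_out * d_in


def lora_trainable_params(d_out: int, d_in: int, rank: int) -> int:
    """Weights in the two low-rank factors: B (d_out x rank) and A (rank x d_in)."""
    return rank * (d_out + d_in)


# Hypothetical layer size and rank, for illustration only
d_out, d_in, rank = 512, 512, 8

full = full_params(d_out, d_in)
lora = lora_trainable_params(d_out, d_in, rank)
ratio = lora / full

print(f"Full fine-tuning updates {full} weights in this layer")
print(f"A rank-{rank} adapter trains {lora} weights ({ratio:.2%} of the layer)")
```

The frozen base weights are still needed at inference time; only the number of trainable weights shrinks.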

# Load the fine-tuning dataset
import pandas as pd

filename = "lamini_docs.json"
instruction_dataset_df = pd.read_json(filename, lines=True)
instruction_dataset_df

# Load it into a Python dictionary
examples = instruction_dataset_df.to_dict()

# Prepare a sample for fine-tuning
if "question" in examples and "answer" in examples:
    text = examples["question"][0] + examples["answer"][0]
elif "instruction" in examples and "response" in examples:
    text = examples["instruction"][0] + examples["response"][0]
elif "input" in examples and "output" in examples:
    text = examples["input"][0] + examples["output"][0]
else:
    text = examples["text"][0]

# Use a prompt template to create an instruction-tuned dataset for fine-tuning
prompt_template_qa = """### Question:
{question}

### Answer:
{answer}"""

The above code shows that instruction tuning uses a prompt template to prepare a dataset and then fine-tunes a model on it. We can fine-tune the pre-trained model to a specific use case using such a custom dataset.
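To make the template step concrete, here is a minimal standard-library sketch that fills the question/answer template from a single record, checking the same key pairs as the code above. The helper name `format_record` and the sample record are hypothetical and only serve as an illustration.

```python
PROMPT_TEMPLATE_QA = """### Question:
{question}

### Answer:
{answer}"""

# Key pairs checked in the same order as in the article's code
KEY_PAIRS = [("question", "answer"), ("instruction", "response"), ("input", "output")]


def format_record(record: dict) -> str:
    """Fill the template from whichever prompt/completion key pair the record has."""
    for prompt_key, completion_key in KEY_PAIRS:
        if prompt_key in record and completion_key in record:
            return PROMPT_TEMPLATE_QA.format(
                question=record[prompt_key], answer=record[completion_key]
            )
    # Fall back to raw text when no known key pair is present
    return record["text"]


# Hypothetical record, not taken from the real dataset
sample = {"instruction": "What is Lamini?", "response": "A platform for fine-tuning LLMs."}
formatted = format_record(sample)
print(formatted)
```

Keeping the key pairs in one list makes it easy to support another dataset layout without touching the formatting logic.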

The next section will examine how Lamini can help fine-tune large language models (LLMs) for custom datasets.

How to Fine-Tune Open-Source LLMs Using Lamini?

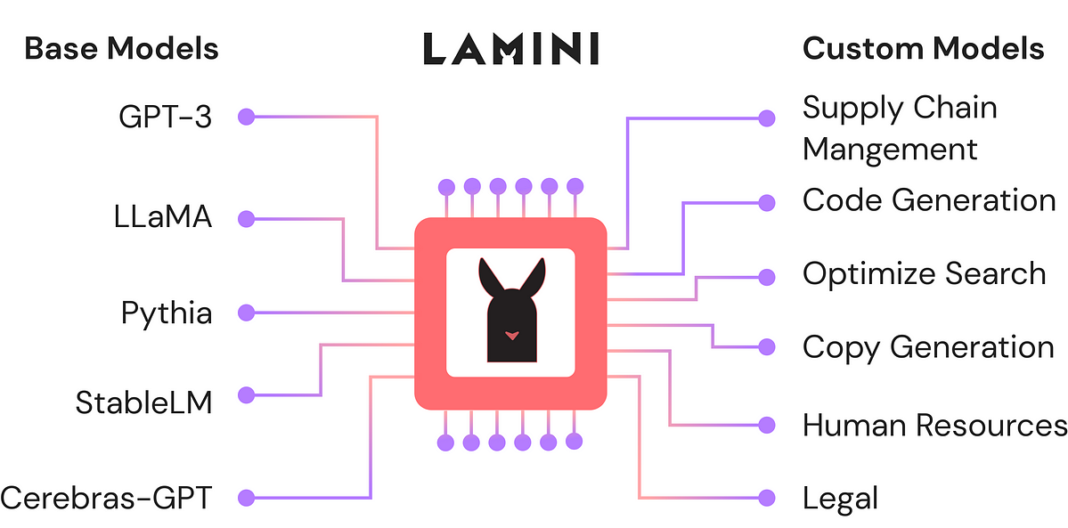

The Lamini platform allows users to fine-tune and deploy models seamlessly without much cost or hardware setup. Lamini provides an end-to-end stack to develop, train, tune, and deploy models according to user convenience and model requirements. Lamini provides its own hosted GPU computing network to train models cost-effectively.

Lamini's memory tuning tools and compute optimization help train and tune models with high accuracy while controlling costs. Models can be hosted anywhere, on a private cloud or through Lamini's GPU network. Next, we will see a step-by-step guide to prepare data to fine-tune large language models (LLMs) using the Lamini platform.

Data Preparation

Generally, we need to select a domain-specific dataset and carry out data cleaning, prompting, tokenization, and storage to prepare data for any fine-tuning task. After loading the dataset, we preprocess it to convert it into an instruction-tuned dataset. We format each sample from the dataset into an instruction, question, and answer format to better fine-tune it for our use cases. Check out the source of the dataset using the link given here. Let's look at a code example of instruction tuning with tokenization for training using the Lamini platform.

import pandas as pd

# Load the dataset and store it as an instruction dataset
filename = "lamini_docs.json"
instruction_dataset_df = pd.read_json(filename, lines=True)
examples = instruction_dataset_df.to_dict()

if "question" in examples and "answer" in examples:
    text = examples["question"][0] + examples["answer"][0]
elif "instruction" in examples and "response" in examples:
    text = examples["instruction"][0] + examples["response"][0]
elif "input" in examples and "output" in examples:
    text = examples["input"][0] + examples["output"][0]
else:
    text = examples["text"][0]

prompt_template = """### Question:
{question}

### Answer:"""

# Store fine-tuning examples in an instruction format
num_examples = len(examples["question"])
finetuning_dataset = []
for i in range(num_examples):
    question = examples["question"][i]
    answer = examples["answer"][i]
    text_with_prompt_template = prompt_template.format(question=question)
    finetuning_dataset.append({"question": text_with_prompt_template,
                               "answer": answer})

In the above example, we formatted the "questions" and "answers" with a prompt template and collected them in a list, ready to be saved to a separate file for tokenization and padding before training the LLM.

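Saving the formatted examples to a separate file can be sketched with the standard library alone. The snippet below writes the records as JSON Lines and reads them back. The file name, the helper names `write_jsonl` and `read_jsonl`, and the two sample records are assumptions made for illustration.

```python
import json
import os
import tempfile

# Two hypothetical records in the same shape as finetuning_dataset above
finetuning_dataset = [
    {"question": "### Question:\nWhat is fine-tuning?\n\n### Answer:",
     "answer": "Adapting a pre-trained model to a custom dataset."},
    {"question": "### Question:\nWhy use a prompt template?\n\n### Answer:",
     "answer": "It gives every training example a consistent structure."},
]


def write_jsonl(records, path):
    """Write one JSON object per line."""
    with open(path, "w", encoding="utf-8") as f:
        for record in records:
            f.write(json.dumps(record, ensure_ascii=False) + "\n")


def read_jsonl(path):
    """Read a JSON Lines file back into a list of dictionaries."""
    with open(path, encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]


# Hypothetical output location inside a temporary directory
output_path = os.path.join(tempfile.mkdtemp(), "lamini_docs_processed.jsonl")
write_jsonl(finetuning_dataset, output_path)
reloaded = read_jsonl(output_path)
print(f"Wrote and reloaded {len(reloaded)} examples")
```

Because `json.dumps` escapes newlines inside strings, each multi-line prompt still occupies exactly one line of the file.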
Tokenize the Dataset

# Tokenization of the dataset with padding and truncation
def tokenize_function(examples):
    if "question" in examples and "answer" in examples:
        text = examples["question"][0] + examples["answer"][0]
    elif "input" in examples and "output" in examples:
        text = examples["input"][0] + examples["output"][0]
    else:
        text = examples["text"][0]

    # Padding: reuse the end-of-sequence token as the pad token
    tokenizer.pad_token = tokenizer.eos_token
    tokenized_inputs = tokenizer(
        text,
        return_tensors="np",
        padding=True,
    )

    # Cap the sequence length at 2048 tokens
    max_length = min(
        tokenized_inputs["input_ids"].shape[1],
        2048
    )

    # Truncation of the text (drop tokens from the left when too long)
    tokenizer.truncation_side = "left"
    tokenized_inputs = tokenizer(
        text,
        return_tensors="np",
        truncation=True,
        max_length=max_length
    )

    return tokenized_inputs

The above code takes the dataset samples as input and applies tokenization with padding and truncation to generate preprocessed, tokenized dataset samples, which can be used for fine-tuning pre-trained models. Now that the dataset is ready, we will look into the training and evaluation of models using the Lamini platform.
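To see what padding and left-side truncation do to the token IDs, here is a toy sketch in pure Python. It uses a whitespace "tokenizer" that is only a stand-in for a real one, and the pad ID of 0 and all helper names are assumptions for illustration.

```python
PAD_ID = 0  # assumed pad ID for this toy example


def toy_tokenize(text):
    """Map each whitespace-separated word to a small integer ID (not a real tokenizer)."""
    vocab = {}
    return [vocab.setdefault(word, len(vocab) + 1) for word in text.split()]


def truncate_left(ids, max_length):
    """Keep only the last max_length IDs, dropping tokens from the left."""
    return ids[max(len(ids) - max_length, 0):]


def pad_right(ids, length):
    """Append pad IDs until the sequence reaches the requested length."""
    return ids + [PAD_ID] * (length - len(ids))


def encode_batch(texts, max_length):
    """Truncate every sequence, then pad all of them to the longest one in the batch."""
    encoded = [truncate_left(toy_tokenize(t), max_length) for t in texts]
    longest = max(len(ids) for ids in encoded)
    return [pad_right(ids, longest) for ids in encoded]


batch = encode_batch(["a b c d e", "a b"], max_length=3)
print(batch)
```

Left truncation keeps the end of each example, which in a question/answer sample is where the answer sits.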

Fine-Tuning Process

Now that we have a dataset prepared in an instruction-tuning format, we will load the dataset into the environment and fine-tune the pre-trained LLM using Lamini's easy-to-use training techniques.

Setting up an Environment

To begin fine-tuning open-source LLMs using Lamini, we must first ensure that our code environment has suitable resources and libraries installed. You need an appropriate machine with sufficient GPU resources and the necessary libraries installed, such as transformers, datasets, torch, and pandas. You must securely load environment variables like api_url and api_key, typically from environment files. You can use packages like dotenv to load these variables. After preparing the environment, load the dataset and models for training.
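One way to avoid silently running with missing credentials is to fail early when a variable is absent. The sketch below uses only `os.environ`. The helper `require_env` is hypothetical, and the placeholder values are set purely so the example runs on its own; in practice the values would come from your environment file.

```python
import os


def require_env(name: str) -> str:
    """Return the value of an environment variable, or raise if it is missing or empty."""
    value = os.environ.get(name)
    if not value:
        raise RuntimeError(f"Environment variable {name} is not set")
    return value


# Placeholder values for this demo only; never hard-code real credentials
os.environ.setdefault("POWERML__PRODUCTION__URL", "https://example.invalid")
os.environ.setdefault("POWERML__PRODUCTION__KEY", "dummy-key-for-demo")

api_url = require_env("POWERML__PRODUCTION__URL")
api_key = require_env("POWERML__PRODUCTION__KEY")

print("API URL loaded:", api_url)
print("API key loaded:", bool(api_key))  # avoid printing the key itself
```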

import os
import lamini
from lamini import Lamini

lamini.api_url = os.getenv("POWERML__PRODUCTION__URL")
lamini.api_key = os.getenv("POWERML__PRODUCTION__KEY")

# Import the necessary libraries and load the environment files
import datasets
import tempfile
import logging
import random
import config
import yaml
import time
import torch
import transformers
import pandas as pd
import jsonlines

# Load the transformer architecture classes and helper utilities
from utilities import *
from transformers import AutoTokenizer
from transformers import AutoModelForCausalLM
from transformers import TrainingArguments
from llama import BasicModelRunner

logger = logging.getLogger(__name__)
global_config = None

Load Dataset

After setting up logging for monitoring and debugging, prepare your dataset using datasets or other data handling libraries like jsonlines and pandas. After loading the dataset, we will set up a tokenizer and model with training configurations for the training process.

# Load the dataset from your local system or the HF cloud
dataset_name = "lamini_docs.jsonl"
dataset_path = f"/content/{dataset_name}"
use_hf = False

# Dataset path on the HF hub (this overrides the local path above, so use_hf is switched on)
dataset_path = "lamini/lamini_docs"
use_hf = True

Set up model, training config, and tokenizer

Next, we select the model for fine-tuning open-source LLMs Using Lamini, “EleutherAI/pythia-70m,” and define its configuration under training_config, specifying the pre-trained model name and dataset path. We initialize the AutoTokenizer with the model’s tokenizer and set padding to the end-of-sequence token. Then, we tokenize the data and split it into training and testing datasets using a custom function, tokenize_and_split_data. Finally, we instantiate the base model using AutoModelForCausalLM, enabling it to perform causal language modeling tasks. Also, the below code sets up compute requirements for our model fine-tuning process.
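The custom function tokenize_and_split_data is not shown in this article. As a rough idea of the splitting half of such a helper, here is a minimal, seeded train/test split in pure Python. The function name `split_dataset`, the 10% test share, and the seed are assumptions, and the real helper may behave differently.

```python
import random


def split_dataset(records, test_size=0.1, seed=123):
    """Shuffle a copy of the records with a fixed seed, then slice off the test set."""
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)
    n_test = max(1, round(len(shuffled) * test_size))
    return shuffled[n_test:], shuffled[:n_test]


# Hypothetical records standing in for tokenized examples
records = [{"id": i} for i in range(20)]
train_records, test_records = split_dataset(records, test_size=0.1)

print(len(train_records), "training examples")
print(len(test_records), "test examples")
```

Fixing the seed keeps the split reproducible, so evaluation numbers from different runs refer to the same held-out examples.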

# Model name
model_name = "EleutherAI/pythia-70m"

# Training config
training_config = {
    "model": {
        "pretrained_name": model_name,
        "max_length": 2048
    },
    "datasets": {
        "use_hf": use_hf,
        "path": dataset_path
    },
    "verbose": True
}

# Set up the auto tokenizer
tokenizer = AutoTokenizer.from_pretrained(model_name)
tokenizer.pad_token = tokenizer.eos_token
train_dataset, test_dataset = tokenize_and_split_data(training_config, tokenizer)

# Set up the base model for causal language modeling
base_model = AutoModelForCausalLM.from_pretrained(model_name)

# Select a GPU if one is available, otherwise fall back to the CPU
device_count = torch.cuda.device_count()
if device_count > 0:
    logger.debug("Select GPU device")
    device = torch.device("cuda")
else:
    logger.debug("Select CPU device")
    device = torch.device("cpu")

# Move the model to the selected device
base_model.to(device)

Set Up Training to Fine-Tune the Model

Finally, we set up the training arguments with hyperparameters. These include the learning rate, epochs, batch size, output directory, eval steps, save steps, warmup steps, evaluation and logging strategy, etc., to fine-tune the model on the custom training dataset.
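Before reading the full argument list, it can help to work out what a few of these hyperparameters imply together. The arithmetic below is a small sketch using the values from this article and an assumed single training device; it treats each training step as one optimizer update after gradient accumulation.

```python
# Values taken from the training arguments in this article
per_device_train_batch_size = 1
gradient_accumulation_steps = 4
max_steps = 3
eval_steps = 120

# Assumption: training runs on a single device
num_devices = 1

# Examples contributing to each optimizer update
effective_batch_size = (
    per_device_train_batch_size * gradient_accumulation_steps * num_devices
)

# Examples consumed over the whole (very short) run
examples_seen = effective_batch_size * max_steps

# Step-based evaluation only triggers if the run reaches eval_steps
reaches_eval_step = max_steps >= eval_steps

print("Effective batch size:", effective_batch_size)
print("Examples seen in total:", examples_seen)
print("Run long enough to reach an evaluation step:", reaches_eval_step)
```

With only three steps, this configuration is a smoke test of the pipeline rather than a real training run; a longer run would raise max_steps well past eval_steps.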

max_steps = 3

# Trained model name
trained_model_name = f"lamini_docs_{max_steps}_steps"
output_dir = trained_model_name

training_args = TrainingArguments(
    # Learning rate
    learning_rate=1.0e-5,

    # Number of training epochs
    num_train_epochs=1,

    # Max steps to train for (each step is a batch of data)
    # Overrides num_train_epochs, if not -1
    max_steps=max_steps,

    # Batch size for training
    per_device_train_batch_size=1,

    # Directory to save model checkpoints
    output_dir=output_dir,

    # Other arguments
    overwrite_output_dir=False,  # Overwrite the content of the output directory
    disable_tqdm=False,  # Disable progress bars
    eval_steps=120,  # Number of update steps between two evaluations
    save_steps=120,  # After # steps model is saved
    warmup_steps=1,  # Number of warmup steps for learning rate scheduler
    per_device_eval_batch_size=1,  # Batch size for evaluation
    evaluation_strategy="steps",
    logging_strategy="steps",
    logging_steps=1,
    optim="adafactor",
    gradient_accumulation_steps=4,
    gradient_checkpointing=False,

    # Parameters for early stopping
    load_best_model_at_end=True,
    save_total_limit=1,
    metric_for_best_model="eval_loss",
    greater_is_better=False
)

After setting the training arguments, the system calculates the model's floating-point operations (FLOPs) based on the input size and gradient accumulation steps, giving insight into the computational load. It also assesses memory usage, estimating the model's footprint in gigabytes. Once these calculations are complete, a Trainer is initialized with the base model, FLOPs, total training steps, and the prepared datasets for training and evaluation. This setup optimizes the training process and enables resource utilization monitoring, which is critical for efficiently handling large-scale model fine-tuning. At the end of training, the fine-tuned model is ready for deployment on the cloud to serve users as an API.
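For a feel of the magnitudes involved, the sketch below estimates both quantities by hand. It relies on a common rule of thumb of roughly 6 floating-point operations per parameter per token for a forward and backward pass, and on 4 bytes per parameter for 32-bit weights. The parameter count of 70 million is an assumed round figure suggested by the model name, so the library's own numbers may differ.

```python
def estimate_training_flops(num_parameters: int, num_tokens: int) -> int:
    """Rule of thumb: about 6 floating-point operations per parameter per token."""
    return 6 * num_parameters * num_tokens


def estimate_memory_gb(num_parameters: int, bytes_per_parameter: int = 4) -> float:
    """Memory needed for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_parameters * bytes_per_parameter / 1e9


# Assumed round parameter count; values below it come from the article's config
num_parameters = 70_000_000
max_length = 2048
gradient_accumulation_steps = 4

flops_per_step = (
    estimate_training_flops(num_parameters, max_length) * gradient_accumulation_steps
)
memory_gb = estimate_memory_gb(num_parameters)

print("Estimated compute per step:", flops_per_step / 1e9, "GFLOPs")
print("Estimated weight memory:", memory_gb, "GB")
```

The memory figure covers the weights only; optimizer state, gradients, and activations add to it during training.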

# Model parameters
model_flops = (
    base_model.floating_point_ops(
        {
            "input_ids": torch.zeros(
                (1, training_config["model"]["max_length"])
            )
        }
    )
    * training_args.gradient_accumulation_steps
)

print(base_model)
print("Memory footprint", base_model.get_memory_footprint() / 1e9, "GB")
print("Flops", model_flops / 1e9, "GFLOPs")

# Set up a trainer
trainer = Trainer(
    model=base_model,
    model_flops=model_flops,
    total_steps=max_steps,
    args=training_args,
    train_dataset=train_dataset,
    eval_dataset=test_dataset,
)

Conclusion

In conclusion, this article provides an in-depth guide to understanding the need to fine-tune LLMs using the Lamini platform. It gives a comprehensive overview of why we must fine-tune the model for custom datasets and business use cases, and of the benefits of using Lamini tools. We also saw a step-by-step guide to fine-tuning the model using a custom dataset and an LLM with tools from Lamini. Let's summarise the important takeaways from the blog.

Key takeaways

- Understanding why fine-tuning models is needed in comparison with prompt engineering and retrieval-augmented generation methods.

- Utilization of platforms like Lamini for easy-to-use hardware setup and deployment techniques for fine-tuned models to serve user requirements.

- Preparing data for the fine-tuning task and setting up a pipeline to train a base model using a range of hyperparameters.

The media shown in this article are not owned by Analytics Vidhya and are used at the Author's discretion.

Frequently Asked Questions

A. The fine-tuning process begins with understanding context-specific requirements, followed by dataset preparation, tokenization, and setting up the training setup, such as hardware requirements, training configs, and training arguments. Finally, a training job for model development is run.

A. Fine-tuning an LLM means training a base model on a specific custom dataset. This generates accurate and context-relevant outputs for specific queries per the use case.

A. Lamini provides integrated language model fine-tuning, inference, and GPU setup for seamless, efficient, and cost-effective development of LLMs.